hi guys,

like many of you, i’ve been holding off my build waiting for jan 2026, hoping the rtx 50-series launch would fix the market. instead, it looks like we just walked into a nightmare.

i’ve been crunching the numbers for a wan 2.2 (14b) rig, and honestly, the “buy local” route is looking financially stupid right now. wanted to check if my math makes sense or if i’m missing something.

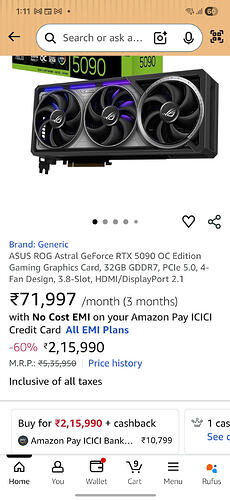

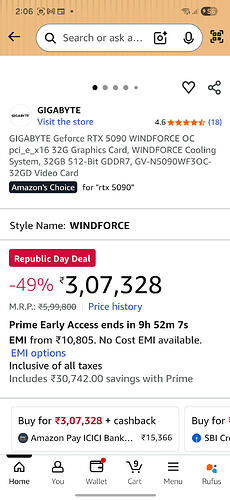

1. the 5090 scalping is a joke: i checked elitehubs and others, and the 5090 is listed at ₹3.3L to ₹4L+ for the zotac/asus models. that’s not a consumer card anymore. even used 4090s aren’t dropping, because nobody can afford the upgrade.

2. the 4090 trap: i expected 4090 prices to drop, but they’re holding steady at ₹1.7L to ₹2L+. sellers know the 5090 is unaffordable, so they aren’t budging.

3. the “memory crisis” is real: i thought the news about ram shortages was marketing hype, but looking at prices on vedant/mdcomputers, it’s brutal.
   - reports say ddr5 contract prices are up 50-60% in 2026 because of server ai demand.
   - a decent 96gb or 128gb ddr5 kit (which i need for model offloading) costs a fortune now.

4. my math: build vs. cloud

option a: build local (dual 3090s or single 4090)
- cost: ~₹2.9L minimum (factoring in the inflated ram/ssd prices).
- problem: wan 2.2 14b (moe) is heavy. even a 4090 struggles with the full model without quantization or system ram offloading.
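
to put a rough number on the “even a 4090 struggles” point, here’s a weights-only sketch. it assumes 14e9 parameters and the usual bytes per parameter for bf16, fp8 and 4-bit weights, and it ignores activations, the text encoder, the vae and any extra moe experts, so treat it as a lower bound, not a measured figure.

```python
# rough weights-only vram estimate for a 14b-parameter model.
# assumptions: 14e9 params; ignores activations, text encoder, vae,
# and any extra moe experts, so real usage will be higher.

PARAMS = 14e9        # parameter count (assumed from "14b")
VRAM_4090_GB = 24    # rtx 4090 has 24 gb of vram

# typical storage cost per parameter for common weight formats
BYTES_PER_PARAM = {
    "bf16": 2.0,
    "fp8": 1.0,
    "4-bit": 0.5,
}


def weights_gb(params: float, bytes_per_param: float) -> float:
    """size of the raw weights in gigabytes (1 gb = 1e9 bytes)."""
    return params * bytes_per_param / 1e9


if __name__ == "__main__":
    for fmt, bpp in BYTES_PER_PARAM.items():
        size = weights_gb(PARAMS, bpp)
        # strict comparison only; real runs need headroom beyond the weights
        verdict = "fits" if size < VRAM_4090_GB else "does not fit"
        print(f"{fmt:>5}: {size:5.1f} gb of weights -> {verdict} in {VRAM_4090_GB} gb")
```

bf16 weights alone come out at 28 gb, already over the 24 gb on a 4090, which is why quantized weights or system ram offloading (and the big ddr5 kit) come into play.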

option b: cloud (vast.ai / runpod). i checked current spot rates for jan:
- rtx 3090: ~$0.13/hr (approx ₹11/hr).
- rtx 4090: ~$0.29/hr (approx ₹25/hr).

conclusion: i’d have to render for ~11,600 hours (₹2.9L ÷ ₹25/hr), i.e. well over a year of 24/7 usage, on a rented 4090 to match the cost of building a rig right now.
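
the same break-even math as a small python sketch, using the ₹2.9L build cost and the spot prices above. the ₹86/usd exchange rate is my assumption (it roughly matches the ₹11 and ₹25 conversions), and the sketch ignores electricity, the resale value of a local rig, and future price changes.

```python
# break-even: how many rented gpu-hours cost as much as the local build.
# assumptions: build cost ₹2.9 lakh and spot prices from the post,
# usd->inr of 86 (assumed); ignores electricity, resale value, price changes.

BUILD_COST_INR = 290_000   # ~₹2.9 lakh
USD_TO_INR = 86.0          # assumed exchange rate, adjust to today's

SPOT_USD_PER_HR = {
    "rtx 3090": 0.13,
    "rtx 4090": 0.29,
}

HOURS_PER_YEAR = 24 * 365  # 8760 hours of 24/7 usage


def break_even_hours(build_cost_inr: float, usd_per_hr: float, usd_to_inr: float) -> float:
    """hours of rental whose total cost equals the build cost."""
    return build_cost_inr / (usd_per_hr * usd_to_inr)


if __name__ == "__main__":
    for gpu, usd in SPOT_USD_PER_HR.items():
        hours = break_even_hours(BUILD_COST_INR, usd, USD_TO_INR)
        inr_per_hr = usd * USD_TO_INR
        print(f"{gpu}: ~inr {inr_per_hr:.0f}/hr -> {hours:,.0f} hours "
              f"(~{hours / HOURS_PER_YEAR:.1f} years of 24/7)")
```

with these assumptions the 4090 lands at roughly 11,600 hours (about 1.3 years nonstop) and the 3090 at roughly 26,000 hours (about 3 years), so the cheaper card pushes the break-even even further out.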

unless you’re stuck on a jio fiber connection with terrible upload speeds (my only real worry, since i’d be uploading 5gb+ checkpoints), does it even make sense to build in 2026?
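
to see how much the upload speed actually matters, here’s a rough sketch of upload time for a 5gb checkpoint. the speeds in the loop are hypothetical examples, not measured jio numbers, and it uses decimal units (1 gb = 8,000 megabits) with no protocol overhead.

```python
# rough upload time for a checkpoint over a home connection.
# assumptions: decimal units (1 gb = 8,000 megabits), no protocol overhead,
# upload speeds below are hypothetical examples, not measurements.


def upload_minutes(size_gb: float, upload_mbps: float) -> float:
    """minutes to push size_gb gigabytes at upload_mbps megabits per second."""
    megabits = size_gb * 8_000
    return megabits / upload_mbps / 60


if __name__ == "__main__":
    for mbps in (10, 50, 100):  # hypothetical upload speeds
        print(f"5 gb at {mbps} mbps: ~{upload_minutes(5, mbps):.0f} min")
```

at a slow 10 mbps a single 5gb push is over an hour, which adds up if it has to be repeated every time a fresh instance is spun up.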

feels like renting a 48gb a6000 or just spamming 3090 or 4090 instances for ₹11/hr or ₹25/hr is the only way until this ram shortage blows over.

anyone else abandoning their build plans?